“What is a good phishing failure rate?” Security leaders get asked this question constantly.

At first glance, the answer seems simple. Lower must be better.

But phishing failure rate is one of the most misunderstood metrics in security awareness. Benchmarks vary widely, simulation difficulty changes results, and a low click rate can sometimes hide real risk rather than reduce it.

A “good” phishing failure rate is not just a number. It depends on program maturity, user behavior, and what other metrics you track alongside it.

In this guide we’ll cover:

- Current industry phishing failure rate benchmarks

- Why comparing averages can be misleading

- Why failure rate must be paired with reporting rate

- What a strong awareness program actually looks like behaviorally

The real goal isn’t just lowering a number; it’s reducing human-driven security risk.

What is a phishing failure rate?

Phishing failure rate measures the percentage of users who interact with a simulated phishing email during a phishing test.

In most awareness programs, this usually means a user:

- Clicks a phishing link

- Downloads a malicious attachment

- Submits credentials on a fake login page

If any of these actions occur, the simulation counts it as a failure.

Simple definition

Phishing failure rate = (Number of users who interact with the phishing simulation ÷ Total number of users who received the simulation) × 100

For example, if 1,000 users receive a simulation and 80 of them interact with it, the phishing failure rate for that campaign would be 8%.
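The calculation above can be sketched in a few lines of Python. This is only an illustration: the function name and the counts (1,000 recipients, 80 interactions) are hypothetical values chosen to produce an 8% rate, not figures from any real campaign.

```python
def failure_rate(interacted: int, recipients: int) -> float:
    """Return the phishing failure rate as a percentage of recipients."""
    if recipients <= 0:
        raise ValueError("recipients must be positive")
    if not 0 <= interacted <= recipients:
        raise ValueError("interacted must be between 0 and recipients")
    return 100 * interacted / recipients


# Hypothetical campaign: 1,000 recipients, 80 of whom interacted.
print(failure_rate(80, 1000))  # 8.0
```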

What actions count as failure?

The definition varies slightly across platforms, but most programs count link clicks, attachment downloads, and credential submissions.

Some platforms also track severity levels, where credential submission is considered a more serious failure than simply clicking.

Why the definition matters

One reason phishing benchmarks can be misleading is that different organizations measure failure differently. Some programs count only credential submission, while others count any interaction at all.

That means two companies with the same “failure rate” may actually be measuring very different user behaviors.
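To make this concrete, here is a minimal sketch of how one campaign can produce two different failure rates depending on the definition. The severity ordering, the names, and the campaign data are all assumptions made up for illustration; real platforms may rank or record actions differently.

```python
from enum import IntEnum


class Action(IntEnum):
    """Hypothetical severity ordering, lowest to highest."""
    NONE = 0
    CLICKED_LINK = 1
    OPENED_ATTACHMENT = 2
    SUBMITTED_CREDENTIALS = 3


def failure_rate(worst_actions: list[Action], threshold: Action) -> float:
    """Percentage of recipients whose worst action meets or exceeds `threshold`."""
    if not worst_actions:
        raise ValueError("no recipients")
    failures = sum(1 for action in worst_actions if action >= threshold)
    return 100 * failures / len(worst_actions)


# Made-up campaign of 200 recipients: one entry per user,
# recording the most severe action that user took.
campaign = (
    [Action.NONE] * 180
    + [Action.CLICKED_LINK] * 12
    + [Action.OPENED_ATTACHMENT] * 4
    + [Action.SUBMITTED_CREDENTIALS] * 4
)

any_interaction = failure_rate(campaign, Action.CLICKED_LINK)
credentials_only = failure_rate(campaign, Action.SUBMITTED_CREDENTIALS)
print(any_interaction, credentials_only)  # 10.0 2.0
```

The same 200 users yield a 10% failure rate under the "any interaction" definition and 2% under "credential submission only", which is why a benchmark is hard to interpret without knowing the definition behind it.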

What is a good phishing failure rate?

Most security leaders expect a clear benchmark for phishing failure rates.

The reality is that “good” depends heavily on program maturity. Organizations with no training typically see very high click rates, while mature security awareness programs reduce failures significantly over time.

Industry data from recent phishing simulation studies shows a wide range of results.

Typical ranges look like this:

These numbers give useful context, but they should be treated as rough benchmarks rather than strict targets.

A company reporting a 5% failure rate might actually have weaker security behavior than one reporting 8%, depending on:

- How realistic the phishing simulations are

- How failure is defined (click vs credential submission)

- How often employees are tested

- Whether employees actively report suspicious messages

This is why benchmarking failure rate alone can create a false sense of security.

For example, Hoxhunt data shows that with continuous, behavior-focused phishing training, organizations often see failure rates fall dramatically over time, dropping from double-digit levels to roughly 3–4% within the first year while reporting behavior improves at the same time.

The chart below illustrates this behavioral improvement over time.

But even this kind of improvement doesn’t tell the full story. Failure rate alone doesn’t measure whether employees are actually detecting and reporting threats.

That’s where comparisons and benchmarks can become misleading.

Why comparing phishing failure rates alone is misleading

Benchmark numbers can be useful for context, but comparing phishing failure rates in isolation often leads to the wrong conclusions. Two organizations may report the same failure rate while having very different levels of real risk.

Several factors make direct comparisons unreliable.

Simulation difficulty varies widely

Phishing simulations are not standardized. Some programs send very obvious phishing emails designed mainly for awareness. Others run highly realistic simulations that closely mimic real attacks.

A company running easy simulations may report a 2–3% failure rate, while another running realistic scenarios may report 8–10%, even though the second program may actually be testing users more effectively. Without knowing simulation difficulty, benchmarks can be misleading.

Organizations define “failure” differently

Different programs count different actions as failures. Some organizations count only credential submission, while others count any link click. That means two companies reporting a 5% failure rate may actually be measuring different user behaviors.

Attack surfaces and employee roles differ

Failure rates also vary depending on:

- Industry threat exposure

- Employee roles and email volume

- Organizational size and complexity

- Frequency of phishing simulations

For example, finance teams and executive assistants typically face higher phishing exposure, which can influence simulation results.

The real risk question isn’t just “who clicked?”

A low failure rate might look good in a dashboard, but it doesn’t necessarily mean employees are actively detecting threats. The more important question is whether employees are recognizing suspicious emails and reporting them to security.

That’s why mature security awareness programs evaluate failure rate alongside other behavioral metrics, not as a standalone measure.

In practice, the most meaningful insight comes from looking at how failure rate changes alongside reporting behavior over time.

Benchmark traps that distort failure rates

Failure rate benchmarks are widely cited in security awareness reports, but they’re easy to misinterpret. Several common “benchmark traps” can distort the metric and make programs appear stronger or weaker than they actually are.

Easy simulations can artificially lower failure rates

If phishing simulations are too obvious, failure rates will drop quickly.

For example:

- Generic phishing templates

- Obvious spelling errors

- Suspicious sender domains

- Poorly designed landing pages

These types of simulations may produce a very low click rate, but they don’t reflect the types of attacks employees actually face.

Real phishing emails are often well-crafted, contextual, and targeted. If simulations don’t match that realism, the failure rate becomes a vanity metric rather than a meaningful risk signal.

Failure rate can be “optimized” without improving behavior

Some programs unintentionally optimize for a lower failure rate instead of stronger security behavior.

This can happen when teams:

- Reduce simulation difficulty

- Test employees less frequently

- Stop testing higher-risk groups

- Focus reporting on campaign-level results rather than behavioral trends

The result is a better-looking metric without meaningful improvement in real-world resilience.

Benchmarks rarely account for simulation frequency

Another hidden variable is how often employees are tested. Organizations that run frequent simulations tend to see higher failure rates initially because users encounter more scenarios.

Over time, however, this approach builds stronger detection habits, which leads to more reliable improvements in behavior. Programs that run only occasional phishing tests may report lower failure rates simply because users are exposed to fewer scenarios.

Benchmark comparisons ignore reporting behavior

Finally, most benchmark reports focus heavily on click rate while ignoring what happens after the email arrives.

But in real-world incidents, the most valuable employee action is often reporting the threat, not just avoiding the click. That’s why failure rate should never be evaluated alone.

To understand whether a program is actually improving security behavior, it needs to be interpreted alongside another critical metric: phishing reporting rate.

Why failure rate must be paired with reporting rate

A phishing failure rate only tells you who interacted with the simulated attack. It doesn’t tell you whether employees are actively helping detect threats.

Phishing reporting rate fills that gap, which is why mature security awareness programs track the two metrics together.

Reporting rate measures the percentage of phishing simulations that employees correctly identify and report to security.

- Failure rate: How many users interacted with the phishing email

- Reporting rate: How many users detected and reported the threat
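As a rough sketch, both metrics can be computed from the same campaign record. The class, its field names, and the counts below are hypothetical. The sketch also assumes both rates are measured per recipient and that the two counts are not mutually exclusive, since a user may click and then report.

```python
from dataclasses import dataclass


@dataclass
class CampaignResult:
    recipients: int
    interacted: int  # users who clicked, downloaded, or submitted credentials
    reported: int    # users who reported the simulated email

    @property
    def failure_rate(self) -> float:
        """Percentage of recipients who interacted with the simulation."""
        return 100 * self.interacted / self.recipients

    @property
    def reporting_rate(self) -> float:
        """Percentage of recipients who reported the simulation."""
        return 100 * self.reported / self.recipients


# Made-up numbers for a single campaign.
result = CampaignResult(recipients=500, interacted=30, reported=275)
print(f"failure {result.failure_rate:.1f}%, reporting {result.reporting_rate:.1f}%")
# failure 6.0%, reporting 55.0%
```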

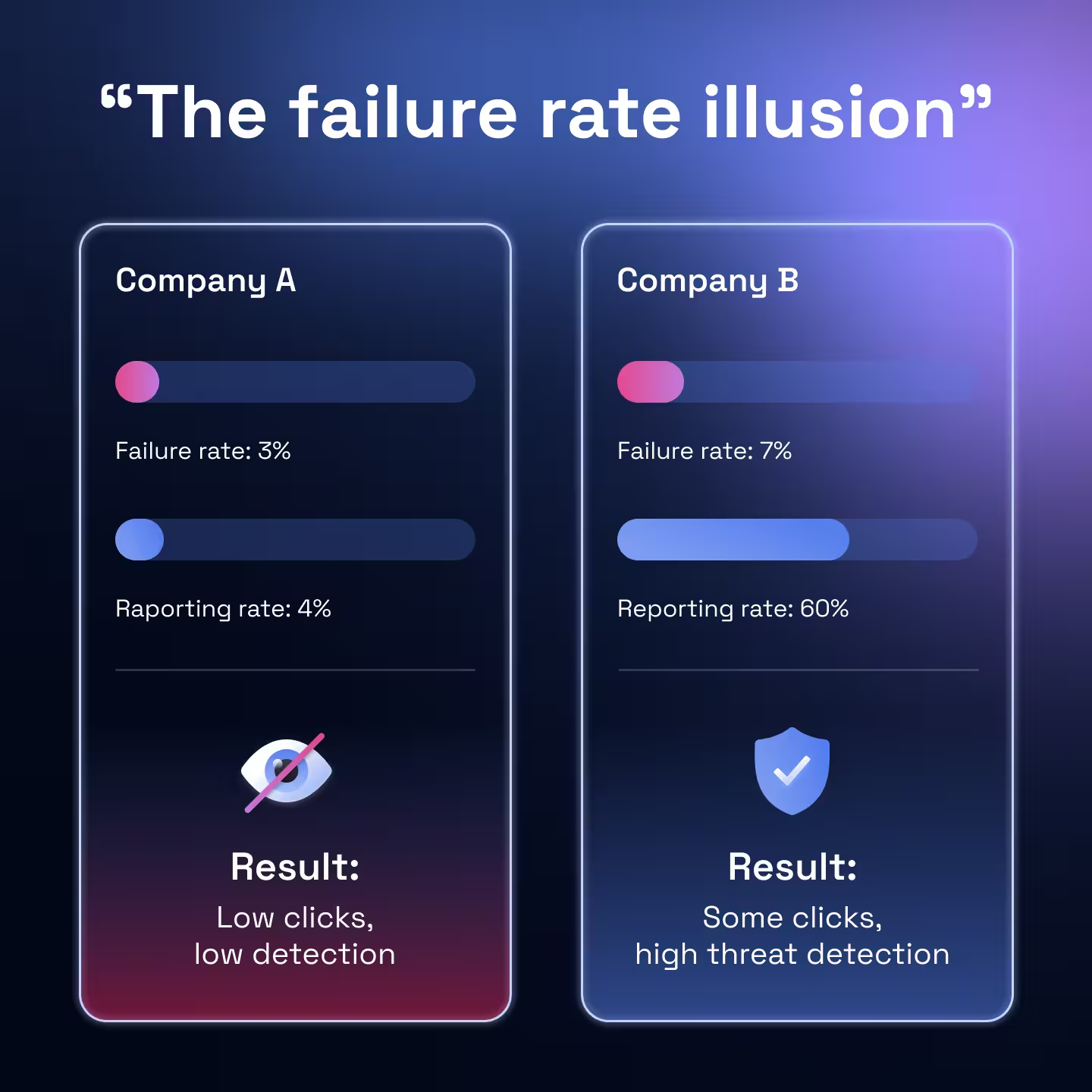

Looking at these metrics together gives a much clearer picture of security behavior.

For example:

At first glance, Program A appears stronger because it has the lower failure rate.

But from a security perspective, Program B is far more resilient.

When employees consistently report suspicious emails, they effectively become a distributed detection network for the security team.

A single reported phishing email can allow analysts to:

- Identify active campaigns early

- Remove malicious emails from other inboxes

- Prevent additional users from interacting with the attack

This behavior dramatically reduces the dwell time of phishing attacks inside an organization.

Failure rate still matters, but its real value comes from understanding it in combination with positive defensive behavior.

A strong awareness program therefore aims to achieve two outcomes simultaneously:

- Failure rates trend downward over time

- Reporting rates increase as employees learn to detect threats

When those two trends move together, it’s a strong signal that security awareness is translating into real-world behavior change.

What “good” actually looks like behaviorally

A good phishing failure rate isn’t just a number. It’s a byproduct of strong security behaviors across the organization.

When awareness programs are working well, you typically see a pattern emerge:

- Failure rates gradually decline over time

- Reporting rates increase

- Repeat clickers decrease

- Employees report suspicious emails faster

These behavioral signals matter more than hitting a specific benchmark. An organization that reduces its phishing failure rate from 18% to 7% over a year while simultaneously increasing reporting activity is showing clear evidence of improvement.
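Two of the signals listed above, repeat clickers and reporting speed, can be derived from simple event logs. The sketch below uses invented user IDs and timings, and it assumes a "repeat clicker" is anyone who failed two or more simulations; your program may draw that line elsewhere.

```python
from collections import Counter
from statistics import median

# Hypothetical event logs across several campaigns.
# Each entry in `clicks` is the ID of a user who failed one simulation.
clicks = ["ana", "ben", "ana", "cho", "ben", "ana"]
# Minutes between delivery and report, one entry per reported simulation.
minutes_to_report = [4, 12, 7, 45, 9]

# Assumption: two or more failures makes someone a repeat clicker.
clicks_per_user = Counter(clicks)
repeat_clickers = sorted(user for user, n in clicks_per_user.items() if n >= 2)

print(repeat_clickers)            # ['ana', 'ben']
print(median(minutes_to_report))  # 9
```

The median is used here rather than the mean so that a single very slow report (45 minutes in this made-up data) does not dominate the figure.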

What’s happening in these environments is a shift in employee behavior.

Instead of treating suspicious emails as background noise, employees begin to:

- Pause before interacting with unexpected messages

- Look for common phishing signals (sender anomalies, urgency, credential prompts)

- Report suspicious emails rather than ignoring them

Over time, this creates a powerful effect. Employees start functioning as a distributed detection network, identifying threats early and feeding signals back to the security team. This behavioral shift is ultimately what security awareness programs are trying to achieve.

The goal isn’t simply to reduce clicks in a simulation; it’s to build a workforce that can recognize, question, and report suspicious activity in real-world situations.

When that behavior becomes consistent, failure rates tend to settle naturally into the low single digits, not because employees are avoiding tests, but because they’ve developed stronger security instincts.

How to interpret your phishing failure rate

Instead of focusing on a single “good” number, security leaders should evaluate phishing failure rate in context.

A strong awareness program typically shows three patterns over time:

When these trends appear together, it indicates that security awareness is changing real user behavior, not just improving test results.

For most organizations, mature programs eventually stabilize around failure rates in the low single digits, but the exact number matters less than the direction of change.

A company moving from 18% → 8% → 4% over two years is demonstrating far stronger progress than one that has sat at 5% for years without behavioral improvement.
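The comparison above comes down to direction of change rather than the latest value. A minimal way to express that, assuming one failure-rate measurement per period (the helper name and the three-point flat series are illustrative):

```python
def total_change(rates: list[float]) -> float:
    """Percentage-point change from the first to the last measurement."""
    if len(rates) < 2:
        raise ValueError("need at least two measurements")
    return rates[-1] - rates[0]


improving = [18.0, 8.0, 4.0]  # the 18% → 8% → 4% trajectory from the text
stagnant = [5.0, 5.0, 5.0]    # flat at 5%; the number of periods is assumed

print(total_change(improving))  # -14.0
print(total_change(stagnant))   # 0.0
```

A negative value means the failure rate fell over the period. On its own this says nothing about reporting behavior, so it is best read next to the reporting-rate trend.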

A good phishing failure rate is not just low; it’s part of a pattern where employees are increasingly recognizing and reporting suspicious messages. That’s the point where security awareness starts translating into real resilience against phishing attacks.
