Most security teams know this feeling.

The training is running, employees are completing the modules and phishing simulations are going out. On paper, it can seem like the organization is doing exactly what it’s supposed to do.

But the risk doesn’t feel lower. Incidents still happen, the same types of mistakes keep showing up, and when someone asks whether the program is actually reducing human risk, the answer starts to feel less clear than it should.

That’s the tension at the center of many security awareness programs: activity is visible, but risk reduction is harder to prove.

In this guide, we’ll look at why that happens - how low fail rates can hide untested users, how averages can mask concentrated exposure, why completion metrics often create false comfort, and what changes when you start measuring human risk instead of just training activity.

The activity comfort illusion

One of the most misleading things about security awareness training is that it can create a strong sense of progress before it creates much real risk reduction.

That’s because training activity is highly visible.

You can see:

- Completion rates

- Phishing campaigns sent

- Policies acknowledged

- Quiz scores reported

- Dashboards filling up with data

All of that makes the program feel active, organized, and under control. And these things do matter - if no training is happening at all, that is a problem. Baseline awareness, policy education, and phishing simulations all have a role to play.

But activity is not the same as risk reduction. A program can be busy without being especially effective. Employees can complete training without changing behavior and a phishing campaign can run on schedule without telling you much about real-world exposure.

This is where many teams get stuck. They are running the training, meeting the compliance requirements, and keeping the program moving. But underneath that activity, the signals that matter most (detection, reporting, speed, and reduction in risky behavior) may not be improving at the same rate.

That’s the activity comfort illusion: it gives organizations a reassuring sense that risk is being managed because training is happening. But in practice, the program may only be proving that employees were exposed to security content, not that the organization is becoming materially safer.

When that happens, the issue usually isn’t effort. It’s that the program is measuring participation in awareness, not exposure to risk.

When training activity gets mistaken for risk reduction

Security awareness training often feels effective because it produces visible outputs, but those outputs don’t necessarily reflect reduced human risk.

Most programs are built around a clear set of activities, such as annual or quarterly training modules, phishing simulations, and policy acknowledgements. These are easy to measure, easy to report, and easy to justify to leadership. So when those numbers look good, it’s natural to assume the program is working.

But this is where the disconnect starts:

- Completion rates tell you that employees saw the training. They don’t tell you whether employees changed how they behave.

- A declining click rate tells you fewer people interacted with a simulation, but it doesn’t tell you whether employees are detecting real threats or just avoiding obvious test emails.

And phishing simulations themselves can reinforce this illusion. If they are predictable, infrequent, or too easy, they become something employees learn to “pass” - not something that builds real detection skills.

Training activity is often used as a proxy for risk reduction because it’s measurable - not because it’s meaningful.

Over time, this creates a subtle but important shift in how programs are run:

- Success becomes high completion, not behavior change

- Simulations become manageable, not realistic

- Metrics become reportable, not actionable

The result is a program that looks healthy on a dashboard, but doesn’t necessarily reduce the likelihood or impact of a real attack. Even when the metrics look strong, they can still be hiding large areas of unmeasured risk.

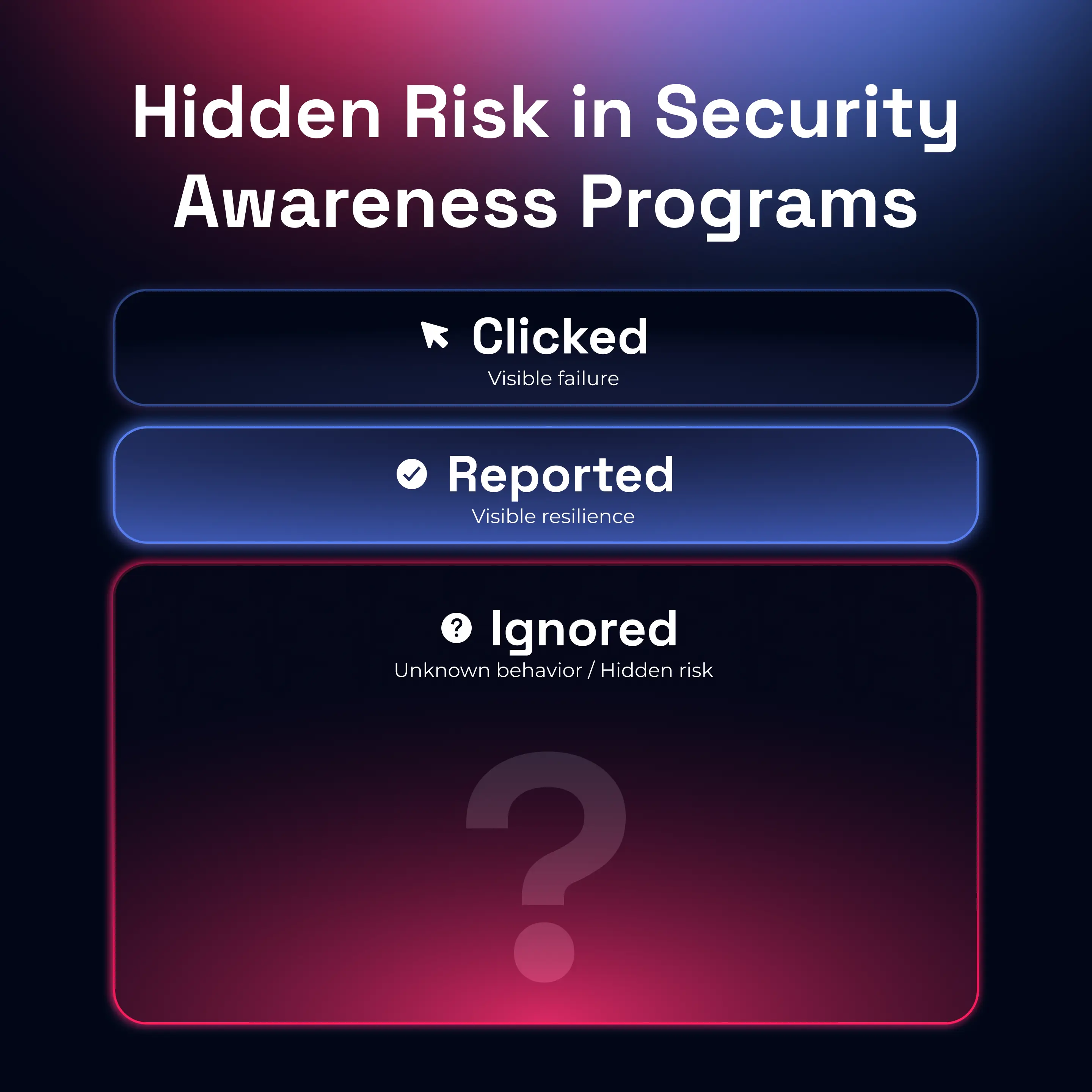

Low fail rates can hide a large untested population

A low phishing failure rate often looks like success, but in many programs it simply means large parts of the workforce were never truly tested.

Most dashboards highlight the same metric: click rate.

If it’s going down, that’s usually taken as a sign that risk is decreasing.

But in many awareness programs, a significant portion of employees do nothing when they receive a phishing simulation. They don’t click, but they also don’t report. They simply ignore it.

This creates what is often called a “miss rate” - the percentage of users who neither interact nor report.

And in some programs, that number can be surprisingly high.

- Employees who ignore emails are counted as “safe”

- Users who aren’t regularly tested never appear in results

- New hires or low-frequency users may never be meaningfully assessed

So the failure rate drops, but not necessarily because behavior improved.

It drops because the measurement is incomplete, and this is where the metric becomes misleading.

A program might report a 4% failure rate, which sounds strong. But if 60-70% of users are effectively untested, that number represents only a small slice of real exposure.
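To make that arithmetic concrete, here is a minimal sketch of how a headline failure rate can sit next to a much larger miss rate. The function name, the outcome labels, and the campaign numbers are all hypothetical, chosen only to mirror the 4% example above; they are not taken from any particular platform.

```python
from collections import Counter


def simulation_summary(outcomes):
    """Summarise one phishing simulation.

    `outcomes` maps a user id to one of three hypothetical labels:
    "clicked", "reported", or "ignored" (neither clicked nor reported).
    """
    total = len(outcomes)
    if total == 0:
        raise ValueError("no outcomes to summarise")
    counts = Counter(outcomes.values())
    return {
        "failure_rate": counts["clicked"] / total,
        "report_rate": counts["reported"] / total,
        "miss_rate": counts["ignored"] / total,
    }


# Hypothetical campaign: 1,000 recipients, 40 clicked, 310 reported, 650 ignored.
outcomes = {}
outcomes.update({f"user{i}": "clicked" for i in range(40)})
outcomes.update({f"user{i}": "reported" for i in range(40, 350)})
outcomes.update({f"user{i}": "ignored" for i in range(350, 1000)})

summary = simulation_summary(outcomes)
for name, value in summary.items():
    print(f"{name}: {value:.0%}")
```

In this made-up campaign the failure rate is 4%, but 65% of recipients did nothing at all. Reported on its own, the 4% says very little about those users.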

And there’s another layer to this. When simulations are predictable or low difficulty, employees can learn to avoid them without actually improving their detection skills. They recognize the pattern, not the threat.

A low fail rate only tells you about visible failures. It doesn’t tell you how much risk is still hidden. To understand that, you need to look beyond clicks and start asking what’s happening across the entire population, including the users who aren’t engaging at all.

Because that’s where risk often sits quietly, unmeasured and unaddressed.

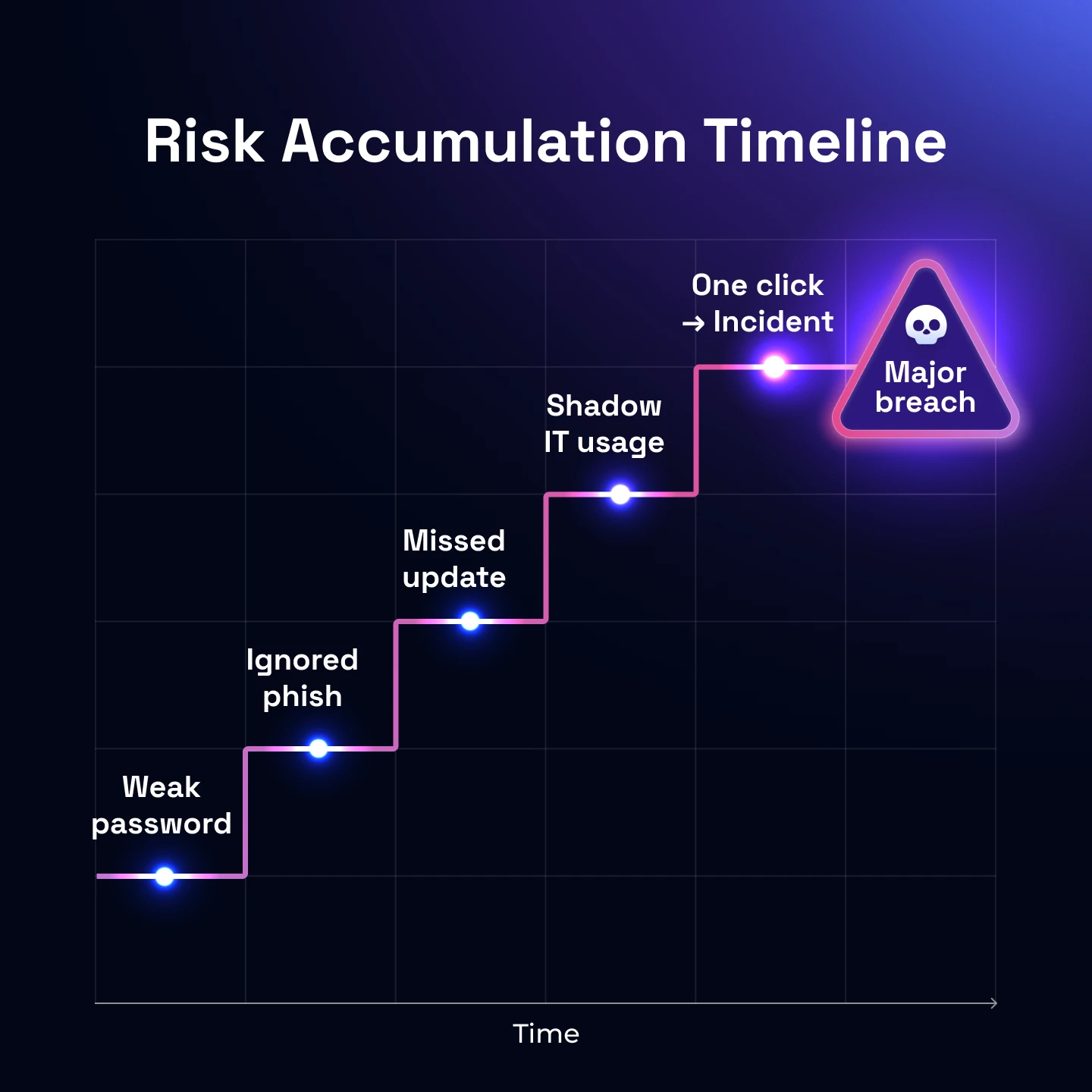

How risk accumulates quietly even when training is in place

Even when security awareness training is active, risk can continue to grow beneath the surface. This happens when knowledge is delivered, but not reinforced, measured, or translated into behavior. Over time, small gaps between what employees know and what they actually do begin to compound into real exposure.

The gap between knowledge and behavior widens over time

In early training phases, employees learn what phishing looks like and how to respond. But without continuous reinforcement, that knowledge fades quickly.

- By commonly cited estimates, 50–70% of new information is forgotten within days without repetition

- Employees may recognize threats conceptually but still act on impulse in real situations

This creates a dangerous pattern: awareness exists, but decision-making under pressure doesn’t improve. People don’t fail because they don’t know. They fail because behavior hasn’t changed.

Small risky behaviors compound across the organization

Risk rarely comes from one major mistake. It builds through thousands of small actions:

- Clicking without verifying context

- Ignoring suspicious emails instead of reporting

- Reusing passwords or bypassing controls

- Trusting internal-looking messages too quickly

Individually, these actions seem minor. At scale, they create what is effectively “death by a thousand clicks.” This is why organizations can run training consistently and still experience incidents.

Training frequency doesn’t match threat evolution

Attackers evolve continuously. Most training programs don’t.

- New tactics (AI-generated phishing, QR codes, thread hijacking) emerge rapidly

- Training content often updates annually or quarterly

- Simulations lag behind real-world attack sophistication

The result: employees are trained on yesterday’s threats while facing today’s attacks. That gap is where risk accumulates.

Psychological factors quietly undermine training

Even when employees complete training, human behavior introduces friction:

- Complacency: “I’ve done the training, I know this already”

- Cognitive overload: security decisions happen in busy workflows

- Fear of being wrong: reduces reporting behavior

- Habit override: speed and convenience win over caution

These factors don’t show up in completion metrics, but they directly impact real-world outcomes.

The signal most programs miss

Perhaps the most important issue: risk accumulation is often invisible in dashboards.

You can have high completion rates and a low failure rate but still have growing exposure.

Because what’s missing is behavioral visibility:

- Who is consistently ignoring threats?

- Who is slow to report?

- Which roles are repeatedly exposed?

Without that layer, risk doesn’t disappear. It just goes unmeasured.

Training doesn’t reduce risk by default. It only reduces risk when it consistently changes how people behave in real situations. And if behavior isn’t changing, risk is still accumulating quietly.

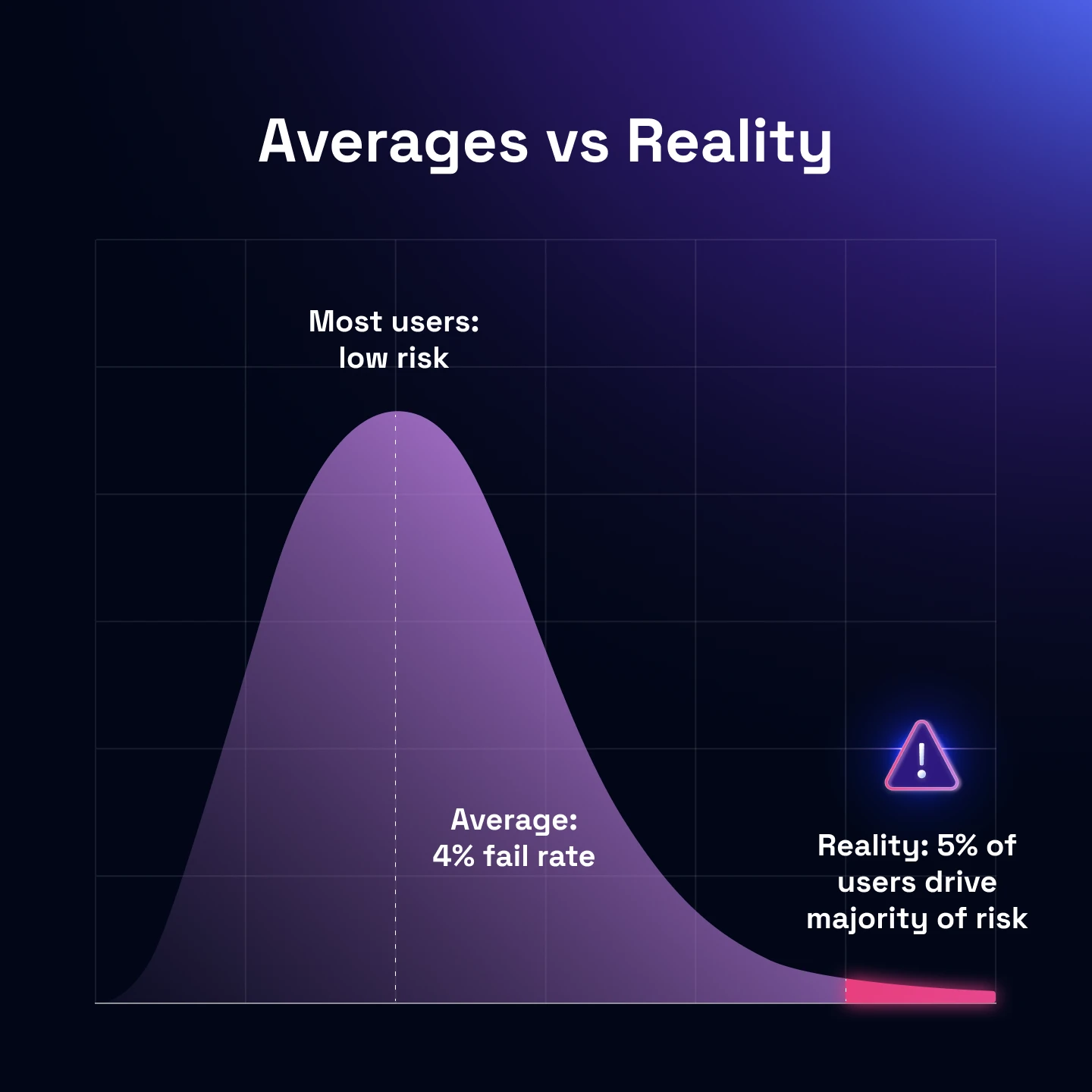

Why averages hide where your real risk actually sits

Most security awareness dashboards rely on averages - average failure rate, average reporting rate, average completion. On the surface, these look reassuring.

But averages don’t reduce risk. They hide where it concentrates.

Averages smooth over your highest-risk users

An organization might report:

- 5% phishing failure rate

- 85% training completion

- Strong reporting metrics overall

That sounds healthy. But inside that average, you often find a small group of users repeatedly failing simulations, specific teams (finance, HR, exec support) exposed to more targeted attacks, and individuals with privileged access behaving more riskily than the average.

If 5% of users are consistently vulnerable, and they sit in high-impact roles, your actual risk is far higher than the average suggests. This is what averages conceal: risk is not evenly distributed.
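A small worked example shows how an overall average can hide a concentrated pocket of failures. This is only a sketch: the group names and counts are invented for illustration, and the function is a hypothetical helper rather than any product’s reporting logic.

```python
def failure_rates_by_group(results):
    """Return (overall_rate, per_group_rates).

    `results` maps a group name to a (users_tested, users_failed) tuple.
    """
    tested = sum(t for t, _ in results.values())
    failed = sum(f for _, f in results.values())
    overall = failed / tested
    per_group = {group: f / t for group, (t, f) in results.items()}
    return overall, per_group


# Hypothetical organization of 1,000 tested users.
results = {
    "finance": (50, 15),         # small, high-impact team
    "everyone_else": (950, 35),  # the rest of the workforce
}

overall, per_group = failure_rates_by_group(results)
print(f"overall: {overall:.1%}")
for group, rate in per_group.items():
    print(f"{group}: {rate:.1%}")
```

With these invented numbers the dashboard average is 5%, while the finance team fails 30% of the time and everyone else fails less than 4% of the time. The average is accurate, but it describes neither group.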

Concentrated exposure is how real attacks succeed

Attackers don’t target your average employee.

They target:

- People with access to money (finance, procurement)

- People with access to systems (IT, engineering)

- People close to leadership (executive assistants)

These groups receive more sophisticated attacks, experience higher email volume and are usually under more time pressure. This means they are more likely to be exploited, even in “well-performing” organizations.

So while your overall metrics look stable, risk is quietly clustering in the exact places attackers care about most.

Why “good averages” create false confidence

Averages create a narrative that most people are doing the right thing. But security doesn’t fail at the majority level. It fails at the weakest point with the highest impact.

This leads to a dangerous outcome:

- Teams stop investigating deeper

- High-risk users don’t get targeted intervention

- Programs optimize for overall metrics instead of real exposure

And risk continues to exist, just less visibly.

What to look at instead

To understand real human risk, you need to move beyond averages and look at distribution and concentration.

That means asking:

- Who are our repeat clickers?

- Which roles have the lowest reporting rates?

- Where does time-to-report lag the most?

- Which users never engage with simulations at all?
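The questions above can be answered from per-user simulation results rather than from averages. The sketch below assumes a simple record with a user, a role, an outcome, and an optional time-to-report; the `SimResult` type, its field names, and the threshold of two clicks for a “repeat clicker” are all assumptions made for this example.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class SimResult:
    user: str
    role: str
    outcome: str  # "clicked", "reported", or "ignored"
    minutes_to_report: Optional[float] = None  # set only when reported


def risk_distribution(results, repeat_threshold=2):
    clicks = defaultdict(int)
    engaged = defaultdict(bool)
    role_total = defaultdict(int)
    role_reported = defaultdict(int)
    role_times = defaultdict(list)

    for r in results:
        # A user counts as engaged if they ever clicked or reported.
        engaged[r.user] |= r.outcome != "ignored"
        role_total[r.role] += 1
        if r.outcome == "clicked":
            clicks[r.user] += 1
        elif r.outcome == "reported":
            role_reported[r.role] += 1
            if r.minutes_to_report is not None:
                role_times[r.role].append(r.minutes_to_report)

    return {
        "repeat_clickers": sorted(
            u for u, n in clicks.items() if n >= repeat_threshold
        ),
        "never_engaged": sorted(u for u, e in engaged.items() if not e),
        "reporting_rate_by_role": {
            role: role_reported[role] / n for role, n in role_total.items()
        },
        "median_minutes_to_report_by_role": {
            role: median(times) for role, times in role_times.items()
        },
    }


# Hypothetical results from a handful of simulations.
results = [
    SimResult("ana", "finance", "clicked"),
    SimResult("ana", "finance", "clicked"),
    SimResult("ben", "finance", "reported", 42.0),
    SimResult("cy", "engineering", "ignored"),
    SimResult("cy", "engineering", "ignored"),
    SimResult("dee", "engineering", "reported", 5.0),
    SimResult("dee", "engineering", "reported", 9.0),
]

report = risk_distribution(results)
print(report)
```

The median is used for time-to-report here because a few very slow reports would otherwise dominate a mean. That is a design choice for the sketch, not a requirement.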

Completion metrics vs exposure metrics: why activity doesn’t equal risk reduction

One of the most common reasons risk doesn’t decrease - despite ongoing training - is that programs measure the wrong things.

Most dashboards are built around completion - training completion rates, quiz scores, time spent in modules. These are easy to track, easy to report, and useful for compliance, but they don’t tell you whether anything has actually changed.

Completion gives you certainty, behavior gives you truth

Completion metrics create a sense of control. You can point to high participation, strong pass rates, and consistent rollout. From a reporting perspective, everything looks healthy. But behavior doesn’t follow the same rules.

An employee can complete every module, pass every quiz, and still click a phishing email under pressure. Why? Because the environment where behavior matters - busy inboxes, ambiguous messages, real-world context - is completely different from a training environment.

That’s why completion becomes a proxy for progress, rather than proof of it.

Exposure metrics show what actually happens

To understand whether risk is going down, you need to look at what happens when employees are exposed to threats. Do they report suspicious emails? Do they hesitate before clicking? Do they escalate quickly or ignore the signal altogether?

Metrics like reporting rate, failure rate, and time-to-report start to answer those questions. Not perfectly, but far more accurately than completion ever will.

They reflect behavior under real conditions and that’s the key difference: they measure response, not participation.

Why this creates the illusion of progress

When programs optimize for completion, they naturally improve completion. Training gets rolled out more efficiently, reminders get better and participation increases.

But none of that guarantees that employees are making better decisions in real scenarios.

So you end up with a familiar pattern:

- Completion rates climb

- Dashboards look stronger

- Risk stays flat

That’s not a failure of effort. It’s a mismatch between what’s being measured and what actually reduces risk.

The shift that changes outcomes

The programs that break out of this pattern stop treating training as the goal. Instead, they treat behavior as the outcome. They still track completion, but it becomes secondary.

The primary question is whether employees are detecting and responding to threats more effectively over time.

That shift forces everything else to evolve - how training is delivered, how simulations are designed, and how success is defined. Because in the end, risk doesn’t go down when training is completed. It goes down when behavior changes.

Early signs your program is creating false confidence

Most teams don’t realise something is wrong because the signals they rely on still look positive - that’s what makes this stage dangerous.

Nothing is obviously broken, but the program has stopped meaningfully reducing risk.

The feeling comes before the data

Security leaders usually notice this before they can prove it.

It shows up as questions like:

- “We’re doing everything right - why aren’t incidents dropping?”

- “Why do the same types of attacks still work?”

- “Why does this feel less effective than it should be?”

This is the earliest signal: intuition diverging from reporting. And it’s often accurate.

Progress becomes harder to explain

In the first year, improvements are obvious - click rates drop and engagement is high.

After that, progress becomes harder to articulate. You might still see movement in dashboards, but it’s incremental, inconsistent, or difficult to connect to real outcomes.

That’s usually because the program has exhausted the easy wins. From that point on, improvement requires deeper behavioral change, not just more training.

The program starts running on inertia

Another sign is when the program continues but without clear direction.

- The same formats repeat

- The same assumptions hold

- The same structure persists year after year

At this stage, the program is no longer evolving. It’s operating by habit.

Success becomes defined by consistency, not impact

One subtle shift is how success gets framed internally.

Instead of asking: “Are we reducing risk?”

Teams start asking: “Did everything run as planned?”

Consistency replaces effectiveness. As long as training is delivered and metrics remain stable, the program is considered successful, even if underlying exposure hasn’t changed.

Why this stage matters

This is the point where most programs stall - not because they fail, but because they appear to be working well enough. That’s what makes it hard to challenge.

But if you stay here too long:

- Risk doesn’t decrease further

- Behavior stops improving

- The program becomes harder to evolve later

The key is recognising this phase for what it is: not failure but a ceiling. And ceilings don’t break with more of the same.

Why training alone can’t reduce human risk

Even when security awareness training is well-designed, well-delivered, and widely adopted, it still operates within a narrow model: it treats risk as a knowledge problem. But in practice, human risk behaves more like a systems problem.

Training is episodic, risk is continuous

Most awareness programs run on cycles:

- Annual training

- Quarterly refreshers

- Periodic simulations

But attacks don’t follow that schedule - they happen continuously, across different channels, with evolving tactics.

So even if training is effective at the moment it’s delivered, its influence fades between those moments while risk continues to accumulate.

The model assumes uniform risk, but exposure isn’t uniform

Traditional programs treat the workforce as a single group. Everyone receives similar training, similar simulations, similar expectations.

But in reality:

- Some roles are targeted far more heavily

- Some individuals interact with external emails all day

- Some users handle sensitive systems or financial processes

Risk isn’t evenly distributed, so a uniform training model will always leave gaps.

Training doesn’t create feedback loops

Another limitation is that training is often one-directional:

- Content is delivered

- Employees complete it

- Results are recorded

But behavior change requires feedback:

- What did the user do?

- How quickly did they respond?

- Did they improve over time?

Without that loop, the program can’t adapt. It becomes static while both user behavior and attacker tactics evolve.

The result: diminishing returns

This is why most programs see the same pattern:

- Strong early improvement

- Slower gains over time

- Eventually, little to no measurable change

Not because training stops working entirely but because the model reaches its limit.

At that point, adding more training produces smaller and smaller returns.

From training program to human risk system

Once you see the limitations clearly, the problem isn’t just that training needs improving. It’s that the model itself needs to change.

Most organizations run security awareness as a program - a set of activities delivered over time. But reducing human risk requires a system that continuously observes, measures, and influences behavior.

Programs deliver content, systems respond to behavior

A traditional program asks:

- Have employees completed training?

- Are simulations being sent?

- Are we hitting participation targets?

A human risk system asks different questions:

- How are employees actually responding to threats?

- Where is risk increasing or concentrating?

- What behavior needs to change next?

That shift moves the focus from delivery to response.

Risk becomes something you can see, not assume

In a program model, risk is inferred from activity:

- Training completed → assumed improvement

- Low failure rate → assumed resilience

In a system model, risk is directly observed: Who reports threats and who doesn’t? Who improves over time and who doesn’t? Where is detection fast and where does it lag?

This turns human risk from something abstract into something measurable and trackable over time.

Intervention becomes targeted, not generic

Once behavior is visible, intervention changes.

Instead of sending the same training to everyone and running identical simulations across the org, you can focus on high-risk roles and individuals, reinforce specific behaviors (e.g. reporting, verification), and adjust difficulty and exposure dynamically.

This is where improvement starts to become continuous, not periodic.

The goal shifts from awareness to response capability

Ultimately, this is the biggest change. Traditional programs aim to make employees aware of threats.

A human risk system aims to ensure employees can:

- Recognise threats in real conditions

- Take the right action consistently

- Respond quickly when it matters

Because that’s what actually reduces risk.

What to do next if risk isn’t going down

If you’ve made it this far, the issue usually isn’t effort.

You’re running training, you’re tracking metrics, you’re doing what most programs are designed to do.

But the signals don’t line up with outcomes. That’s the moment where incremental fixes stop working and the approach itself needs to shift.

Start by changing what you measure

Before changing tools or content, change the lens.

Move away from asking: “Did people complete training?”

And toward: “Are people detecting and responding better over time?”

This means prioritising:

- Reporting behavior

- Time-to-report

- Repeat risk patterns

- Role-based exposure

Because what you measure determines what improves.
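One way to ask “are people detecting and responding better over time?” is to compute the same behavioral metrics per period and compare them. The sketch below is a rough illustration: the event format, the quarter labels, and the sample data are hypothetical, and it compares only the first and last periods rather than fitting any trend.

```python
from statistics import median


def period_metrics(events):
    """`events` is a list of (outcome, minutes_to_report or None) tuples."""
    total = len(events)
    reported = sum(1 for outcome, _ in events if outcome == "reported")
    times = [m for outcome, m in events if outcome == "reported" and m is not None]
    return {
        "reporting_rate": reported / total if total else 0.0,
        "median_minutes_to_report": median(times) if times else None,
    }


def reporting_trend(by_period):
    """`by_period` maps a period label to its events, in chronological order."""
    metrics = {label: period_metrics(events) for label, events in by_period.items()}
    labels = list(metrics)
    first, last = metrics[labels[0]], metrics[labels[-1]]
    change = last["reporting_rate"] - first["reporting_rate"]
    return metrics, change


# Hypothetical data for two quarters.
by_period = {
    "Q1": [("reported", 60), ("ignored", None), ("clicked", None), ("ignored", None)],
    "Q2": [("reported", 20), ("reported", 30), ("ignored", None), ("clicked", None)],
}

metrics, change = reporting_trend(by_period)
print(metrics)
print(f"change in reporting rate: {change:+.0%}")
```

In this invented data, the reporting rate rises from 25% to 50% and the median time-to-report falls from 60 to 25 minutes. Neither figure would appear in a completion report.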

Make risk visible at the right level

Averages won’t help you here. To actually reduce risk, you need to see:

- Which individuals are consistently struggling

- Which roles are most exposed

- Where detection breaks down

This is where most programs unlock their first real insight. Not by adding more training, but by seeing risk clearly for the first time.

Shift from campaigns to continuous reinforcement

If training happens in bursts, behavior will too.

The goal is to keep security present in daily decision-making, not separate from it.

Focus effort where it actually matters

Not every user carries the same risk. Trying to improve everyone equally is inefficient and often ineffective.

Instead:

- Prioritise high-risk roles

- Support repeat offenders with targeted intervention

- Increase challenge where users are already strong

This is how programs move from broad awareness to targeted risk reduction.

Treat behavior as something that needs to be maintained

Even when things improve, they don’t stay that way automatically. Habits decay, threats evolve and context changes.

So the goal isn’t to “fix” human risk once. It’s to continuously manage and reduce it over time.

If risk isn’t going down, it’s rarely because nothing is happening. It’s because the program is optimised for activity, not outcomes.

And once you shift that focus from training delivered to behavior changed, that’s when risk starts to move in the right direction.

